Data Science Team

Note: This documentation is also available in a rendered format here.

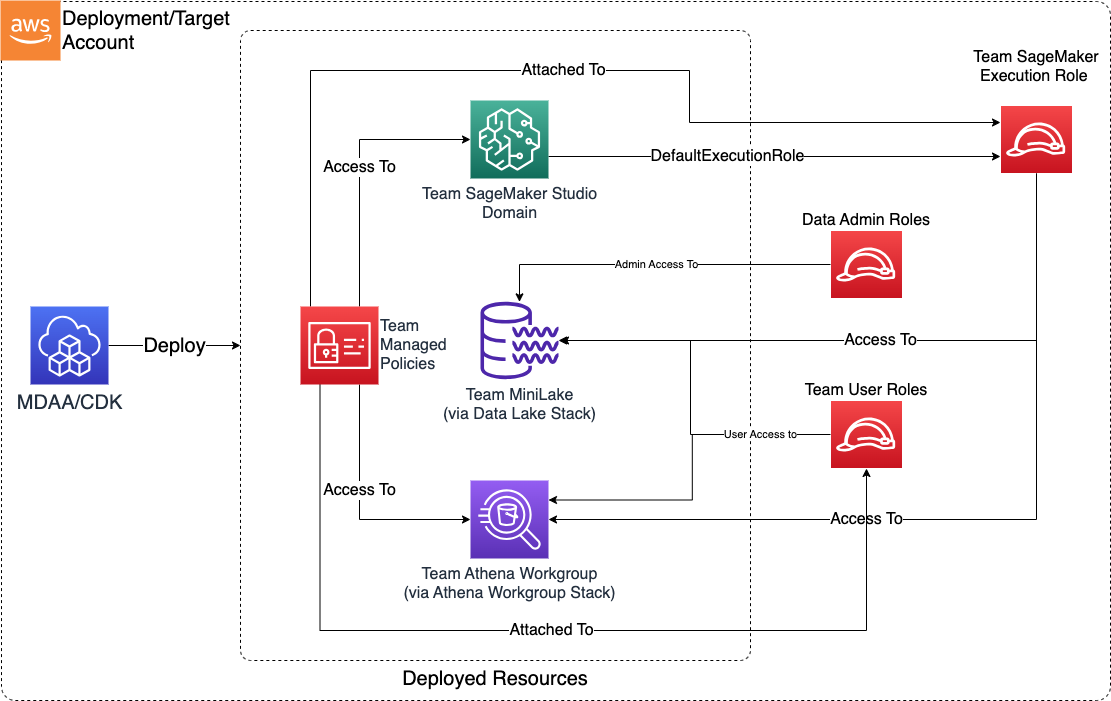

Provisions a complete data science team environment including SageMaker AI Studio domains with user profiles, Athena workgroups, team S3 data buckets, KMS encryption, Lake Formation permissions, and IAM role management. Supports both IAM and SSO authentication modes with configurable security guardrails. Use this module when you need to onboard a data science team with a ready-to-use ML workspace, shared storage, and governed data access in a single deployment.

Deployed Resources

This module deploys and integrates the following resources:

Team Mini Lake and KMS Key - An S3-based mini data lake, encrypted with a team-specific customer-managed KMS key, that the team can use as a persistence layer for its work. Deployed using the Datalake KMS and Buckets L3 Construct.

Team Athena Workgroup and Results Bucket - An Athena Workgroup for use by the team, with a dedicated S3 bucket for query results. Deployed using the Athena Workgroup L3 Construct.

SageMaker AI Studio Domain and User Profiles - SageMaker AI Studio Domain configured to use the Team Execution Role, with optional user-specific User Profiles. Deployed using the Studio Domain L3 Construct.

SageMaker Read/Write Team Managed Policies - IAM managed policies that grant read and write access to SageMaker and enforce guardrails on SageMaker resource creation. The policies are attached automatically to the team execution role and to mutable team user roles. Immutable roles (such as IAM Identity Center/SSO roles) must be bound to the policies manually through their SSO permission set.
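The role types above map onto the team block of the module config. The fragment below is a sketch that reuses only keys from the sample configs further down; data-scientist-role is a hypothetical role name. Based on the descriptions in this README, the mutable role should receive the SageMaker managed policies automatically, while the immutable SSO role is granted only team bucket and KMS key access through resource policies and needs the managed policies bound through its permission set.

```yaml
team:
  dataAdminRoles:
    - name: Admin                 # placeholder admin role
  teamExecutionRole:
    name: team-execution-role     # must trust sagemaker.amazonaws.com
  teamUserRoles:
    # Mutable role (hypothetical name): the module attaches the
    # SageMaker read/write/guardrail managed policies to it
    - name: data-scientist-role
    # Immutable SSO role: access to the team bucket and KMS key only,
    # via resource policies; bind the managed policies manually
    # through the SSO permission set
    - name: AWSReservedSSO_datascientist_abcdefg
      immutable: true
```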

Related Modules

- SageMaker Studio — Deploy a standalone Studio domain when you need Studio without the full data science team environment

- SageMaker Notebooks — Deploy standalone SageMaker notebook instances as an alternative to Studio

- Data Lake — Deploy data lake buckets that the team can access via Lake Formation permissions

- Athena Workgroup — Deploy standalone Athena workgroups; this module provisions team workgroups automatically

- Roles — Create IAM roles that can be referenced as team user or data admin roles

- Lake Formation Access Control — Manage fine-grained Lake Formation grants for team access to data lake resources

Security/Compliance Details

This module is designed in alignment with MDAA security/compliance principles and CDK nag rulesets. Review it further before production deployment to confirm that organization-specific compliance requirements are met.

- Encryption at Rest:
  - Team S3 buckets encrypted with a team-specific customer-managed KMS key
  - SageMaker resources encrypted with the team KMS key
  - Athena query results encrypted with the team KMS key
- Encryption in Transit:
  - All SageMaker and Athena communications use TLS
- Least Privilege:
  - Separate read, write, and guardrail managed policies for SageMaker
  - Team bucket access restricted to the team execution role, data admin roles, and team user roles via bucket policy
  - Results bucket access limited to the team execution role, team user roles, and data admin roles
  - Workgroup access granted via IAM managed policy to the team execution role and mutable team user roles
- Separation of Duties:
  - Guardrail policy enforces security parameters on SageMaker resource creation
  - Team execution role requires explicit SageMaker service trust
  - Lake Formation permissions control team access to data lake resources
- Network Isolation:
  - SageMaker AI Studio domain is VPC-bound with configurable security group ingress/egress rules
  - Direct internet access disabled
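The security group rules behind the network isolation controls are set in the securityGroupIngress and securityGroupEgress blocks of studioDomainConfig. The fragment below is a sketch rather than a recommended baseline: it limits ingress to HTTPS from one internal CIDR and one trusted security group, reusing the rule shapes from the comprehensive sample. The CIDR and the SSM parameter path are hypothetical placeholders, and the other studioDomainConfig keys (authMode, vpcId, subnetIds, and so on) are omitted for brevity.

```yaml
team:
  studioDomainConfig:
    # authMode, vpcId, subnetIds, etc. omitted; see the samples below
    securityGroupIngress:
      ipv4:
        # Hypothetical internal range; replace with your own CIDR
        - cidr: 10.0.0.0/16
          description: HTTPS from internal network only
          protocol: tcp
          port: 443
      sg:
        # Hypothetical SSM parameter holding a trusted security group ID
        - sgId: ssm:/path/to/trusted/sg/id
          protocol: tcp
          port: 443
```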

AWS Service Endpoints

The following VPC endpoints may be required if public AWS service endpoints are unreachable (e.g., private subnets without a NAT gateway, firewalled environments, or PrivateLink-only architectures):

| AWS Service | Endpoint Service Name | Type |
|---|---|---|
| SageMaker API | com.amazonaws.{region}.sagemaker.api | Interface |
| SageMaker Runtime | com.amazonaws.{region}.sagemaker.runtime | Interface |
| SageMaker Studio | aws.sagemaker.{region}.studio | Interface |
| Athena | com.amazonaws.{region}.athena | Interface |
| Glue | com.amazonaws.{region}.glue | Interface |
| KMS | com.amazonaws.{region}.kms | Interface |
| S3 | com.amazonaws.{region}.s3 | Gateway |
| CloudWatch Logs | com.amazonaws.{region}.logs | Interface |
| STS | com.amazonaws.{region}.sts | Interface |
| SSM Parameter Store | com.amazonaws.{region}.ssm | Interface |
| Lake Formation | com.amazonaws.{region}.lakeformation | Interface |
| EFS | com.amazonaws.{region}.elasticfilesystem | Interface |
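When the Studio subnets reach AWS services through the endpoints above instead of a NAT gateway, the domain security group's egress can be narrowed to those endpoints. A minimal sketch, assuming your interface endpoints share one security group whose ID is stored in a hypothetical SSM parameter, and with pl-xxxxxxxx standing in for your region's S3 prefix list; it reuses the sg and prefixList rule shapes from the comprehensive sample. Studio domains also use an EFS volume, so NFS traffic (TCP 2049) may need an additional rule depending on how your deployment is set up.

```yaml
team:
  studioDomainConfig:
    securityGroupEgress:
      sg:
        # Hypothetical SSM parameter: security group attached to the
        # interface endpoints (SageMaker, Athena, Glue, KMS, STS, ...)
        - sgId: ssm:/path/to/vpc-endpoint/sg/id
          protocol: tcp
          port: 443
      prefixList:
        # Placeholder for the regional S3 prefix list used by the
        # S3 gateway endpoint
        - prefixList: pl-xxxxxxxx
          description: prefix list for com.amazonaws.{{region}}.s3
          protocol: tcp
          port: 443
```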

Configuration

MDAA Config

Add the following snippet to your mdaa.yaml under the modules: section of a domain/env to use this module:

datascience-team: # Module Name can be customized
  module_path: '@aws-mdaa/datascience-team' # Must match module NPM package name
  module_configs:
    - ./datascience-team.yaml # Filename/path can be customized
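The snippet above sits inside the domain and environment hierarchy of mdaa.yaml. A sketch of a complete file, assuming the usual MDAA layout of organization, domains, environments, and modules; my-org, my-domain, and dev are hypothetical placeholders, and any other top-level settings your deployment needs (such as region or tag configs) are omitted.

```yaml
# Hypothetical organization, domain, and environment names
organization: my-org
domains:
  my-domain:
    environments:
      dev:
        modules:
          datascience-team: # Module Name can be customized
            module_path: '@aws-mdaa/datascience-team'
            module_configs:
              - ./datascience-team.yaml
```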

Module Config Samples and Variants

Copy the contents of the relevant sample config below into the ./datascience-team.yaml file referenced in the MDAA config snippet above.

Minimal Configuration

Deploys a SageMaker Studio domain with IAM auth, team S3 data lake, and Athena workgroup. Start here for a quick team environment with sensible defaults before customizing user profiles or lifecycle configs.


# Minimal config for the Data Science Team module.
# Deploys a SageMaker Studio domain with IAM auth, team S3 data
# lake, and Athena workgroup.
team:
  # See CONFIGURATION.md for role reference options (name, arn, id).
  # Admin roles granted access to team resources including KMS keys,
  # S3 buckets, and SageMaker resources.
  dataAdminRoles:
    - name: Admin
  # Execution role for SageMaker workloads. Must have
  # sagemaker.amazonaws.com service trust.
  teamExecutionRole:
    name: team-execution-role
  # (Optional) SageMaker Studio domain: the module's primary
  # resource. Without it, only the S3/Athena resources are deployed.
  studioDomainConfig:
    # Authentication mode (enum: IAM, SSO)
    authMode: IAM
    # VPC ID for Studio domain deployment
    # Often created by your VPC/networking stack.
    # Example SSM: ssm:/path/to/vpc/id
    vpcId: vpc-id
    # Subnet IDs for Studio user applications
    # Often created by your VPC/networking stack.
    # Example SSM: ssm:/path/to/subnet/id
    subnetIds:
      - subnet-id
    # Admin roles for domain management
    dataAdminRoles:
      - name: Admin
    # At least one user profile
    userProfiles:
      example-user-id:
        userRole:
          name: team-execution-role
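Because studioDomainConfig is optional, the config can be reduced further when a team only needs shared storage and query access. A sketch of that variant, which, per the comment in the minimal sample, should deploy only the team S3 resources and Athena workgroup; the role names are the same placeholders used above.

```yaml
team:
  dataAdminRoles:
    - name: Admin
  teamExecutionRole:
    name: team-execution-role
  # studioDomainConfig omitted: no SageMaker Studio domain or
  # user profiles are created, only the S3/Athena resources
```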

Comprehensive Configuration

Provisions a SageMaker Studio domain with IAM auth, user profiles, lifecycle configs, custom images, S3 mini data lake with inventory, and team access controls for collaborative ML development. Use this as a reference when you need full control over user profiles, lifecycle scripts, storage policies, and team-level access controls.

sample-config-comprehensive.yaml


# Sample config for the Data Science Team module.
# Provisions a SageMaker Studio domain with IAM auth, user profiles,
# lifecycle configs, custom images, S3 mini data lake with inventory,
# and team access controls for collaborative ML development.
#
# This is the comprehensive configuration demonstrating all compatible
# properties with IAM authentication mode. For SSO auth mode, see
# sample-config-sso.yaml.

# Complete data science team infrastructure configuration. Defines
# SageMaker Studio domain, S3 mini data lake, Athena workgroup,
# execution roles, and user profiles.
team:
  # See CONFIGURATION.md for role reference options (name, arn, id).
  # Admin roles granted access to team resources including KMS keys,
  # S3 buckets, and SageMaker resources. Roles can be referenced by
  # name, arn, or id.
  dataAdminRoles:
    - name: Admin
  # (Optional) Team member roles for accessing shared resources like
  # data lake, SageMaker Studio, and collaborative tools.
  teamUserRoles:
    - id: generated-role-id:data-scientist
    # Immutable roles (e.g. SSO roles) are provided access only to
    # the team bucket and KMS key via resource policies.
    - name: AWSReservedSSO_datascientist_abcdefg
      immutable: true
  # Execution role for SageMaker workloads including training jobs,
  # endpoints, and notebooks. Must have sagemaker.amazonaws.com
  # service trust with sts:AssumeRole and sts:SetSourceIdentity.
  teamExecutionRole:
    id: generated-role-id:team-execution-role
  # (Optional) Custom policy name prefix for portable naming across
  # accounts with SSO integration. When set, uses this prefix
  # instead of the naming module for policy names.
  verbatimPolicyNamePrefix: 'some-prefix'
  # (Optional) S3 inventory configurations for team data lake
  # bucket content analysis and governance.
  inventories:
    team-inventory:
      # S3 prefix to include in the inventory report
      prefix: 'data/'
      # (Optional) Destination bucket for inventory reports.
      # Defaults to the source bucket under /inventory prefix.
      destinationBucket: 'test-inventory-bucket'
      # (Optional) S3 prefix within the destination bucket for
      # inventory report storage
      destinationPrefix: 'inventory-reports/'
      # (Optional) AWS account ID owning the destination bucket
      # for cross-account inventory delivery
      destinationAccount: '{{context:account-2}}'
  # (Optional) SageMaker Studio domain configuration for the team's
  # collaborative ML development environment.
  studioDomainConfig:
    # Authentication mode (enum: IAM, SSO)
    authMode: IAM
    # VPC ID for Studio domain deployment
    # Often created by your VPC/networking stack.
    # Example SSM: ssm:/path/to/vpc/id
    vpcId: vpc-id
    # Subnet IDs for Studio user applications
    # Often created by your VPC/networking stack.
    # Example SSM: ssm:/path/to/subnet/id
    subnetIds:
      - subnet-id
    # (Optional) KMS key ARN for EFS encryption
    kmsKeyArn: 'arn:{{partition}}:kms:{{region}}:{{account}}:key/test-efs-key'
    # (Optional) Memory limit in MB for the lifecycle asset
    # deployment Lambda
    assetDeploymentMemoryLimitMB: 512
    # (Optional) S3 prefix for lifecycle asset storage
    assetPrefix: 'lifecycle-assets/'
    # (Optional) Default execution role for Studio applications
    defaultExecutionRole:
      id: generated-role-id:team-execution-role
    # (Optional) Admin roles for domain management
    dataAdminRoles:
      - arn: 'arn:{{partition}}:iam::{{account}}:role/DomainAdmin'
    # (Optional) Security group ingress rules
    securityGroupIngress:
      # (Optional) IPv4 CIDR block rules for security group traffic
      # control defining IP address-based access restrictions
      ipv4:
        # CIDR block specification for network access control
        - cidr: 10.0.0.0/24
          # (Optional) Description for the rule
          description: Allow HTTPS from internal network
          port: 443
          protocol: tcp
          # (Optional) Ending port number for port range rules
          toPort: 443
      # (Optional) Security group rules for cross-security group
      # traffic control
      sg:
        # Security group identifier for SG-based access control
        - sgId: ssm:/ml/sm/sg/id
          port: 443
          protocol: tcp
      # (Optional) Prefix list rules for security group traffic
      # control defining managed prefix list-based access
      # restrictions
      prefixList:
        - prefixList: pl-test-ingress
          description: Ingress from managed prefix list
          protocol: tcp
          port: 443
    # (Optional) Security group egress rules
    securityGroupEgress:
      prefixList:
        # Prefix list identifier for managed IP range access control
        - prefixList: pl-4ea54027
          description: prefix list for com.amazonaws.{{region}}.dynamodb
          protocol: tcp
          port: 443
        - prefixList: pl-7da54014
          description: prefix list for com.amazonaws.{{region}}.s3
          protocol: tcp
          port: 443
          # (Optional) Ending port number for port range rules
          toPort: 443
      ipv4:
        - cidr: 0.0.0.0/0
          port: 443
          protocol: tcp
          # (Optional) Description for the rule
          description: Allow outbound HTTPS
      sg:
        - sgId: ssm:/ml/sm/sg/id
          port: 443
          protocol: tcp
    # (Optional) S3 prefix for shared notebook storage
    notebookSharingPrefix: notebooks
    # (Optional) Named user profiles for Studio domain. Key is the
    # user identifier: Session Name portion of aws:userid (IAM mode).
    userProfiles:
      example-user-id:
        # (Optional in the schema, but required for IAM auth mode.)
        # The role from which the user launches the user profile in Studio.
        userRole:
          id: generated-role-id:data-scientist
    # (Optional) Default user settings for Studio applications
    defaultUserSettings:
      # (Optional) The kernel gateway app settings
      kernelGatewayAppSettings:
        # (Optional) A list of custom SageMaker images configured
        # to run as a KernelGateway app
        customImages:
          # The name of the AppImageConfig
          - appImageConfigName: 'appImageConfigName'
            # The name of the CustomImage
            imageName: 'imageName'
            # (Optional) The version number of the CustomImage
            imageVersionNumber: 1
        # (Optional) The default instance type and SageMaker image
        # ARN used by the KernelGateway app
        defaultResourceSpec:
          # (Optional) The instance type
          instanceType: 'ml.t3.medium'
          # (Optional) The ARN of the SageMaker image
          sageMakerImageArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image/test-image'
          # (Optional) The ARN of the image version
          sageMakerImageVersionArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image-version/test-image/1'
          # (Optional) The ARN of the Lifecycle Configuration
          lifecycleConfigArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-lcc'
        # (Optional) The ARNs of the Lifecycle Configurations
        # attached to the user profile or domain
        lifecycleConfigArns:
          - 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-kernel-lcc'
      # (Optional) The JupyterLab app settings
      jupyterLabAppSettings:
        # (Optional) Idle shutdown settings for JupyterLab
        # applications
        appLifecycleManagement:
          # (Optional) Settings related to idle shutdown of Studio
          # applications
          idleSettings:
            # (Optional) The time that SageMaker waits after the
            # application becomes idle before shutting it down
            idleTimeoutInMinutes: 60
            # (Optional) Indicates whether idle shutdown is
            # activated for the application type
            lifecycleManagement: ENABLED
            # (Optional) The maximum value in minutes that custom
            # idle shutdown can be set to by the user
            maxIdleTimeoutInMinutes: 120
            # (Optional) The minimum value in minutes that custom
            # idle shutdown can be set to by the user
            minIdleTimeoutInMinutes: 30
        # (Optional) The lifecycle configuration that runs before
        # the default lifecycle configuration
        builtInLifecycleConfigArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-builtin-lcc'
        # (Optional) A list of Git repositories that SageMaker
        # automatically displays to users for cloning
        codeRepositories:
          # The URL of the Git repository
          - repositoryUrl: 'https://github.com/example/repo.git'
        # (Optional) A list of custom SageMaker images configured
        # to run as a JupyterLab app
        customImages:
          - appImageConfigName: 'jupyterLabAppImageConfig'
            imageName: 'jupyterLabImage'
            # (Optional) The version number of the CustomImage
            imageVersionNumber: 1
        # (Optional) The default instance type and SageMaker image
        # ARN used by the JupyterLab app
        defaultResourceSpec:
          # (Optional) The instance type
          instanceType: 'ml.t3.medium'
          # (Optional) The ARN of the SageMaker image
          sageMakerImageArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image/test-jupyterlab-image'
          # (Optional) The ARN of the image version
          sageMakerImageVersionArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image-version/test-jupyterlab-image/1'
          # (Optional) The ARN of the Lifecycle Configuration
          lifecycleConfigArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-jupyterlab-default-lcc'
        # (Optional) The ARNs of the lifecycle configurations
        # attached to the user profile or domain
        lifecycleConfigArns:
          - 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-jupyterlab-lcc'
      # (Optional) The Jupyter server's app settings
      jupyterServerAppSettings:
        # (Optional) The default instance type and SageMaker image
        # ARN used by the JupyterServer app
        defaultResourceSpec:
          # (Optional) The instance type (JupyterServer apps only
          # support the 'system' value)
          instanceType: 'system'
          # (Optional) The ARN of the SageMaker image
          sageMakerImageArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image/test-jupyter-server-image'
          # (Optional) The ARN of the image version
          sageMakerImageVersionArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image-version/test-jupyter-server-image/1'
          # (Optional) The ARN of the Lifecycle Configuration
          lifecycleConfigArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-jupyter-server-default-lcc'
        # (Optional) The ARNs of the Lifecycle Configurations
        # attached to the JupyterServerApp
        lifecycleConfigArns:
          - 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-jupyter-server-lcc'
      # (Optional) A collection of settings that configure the
      # RSessionGateway app
      rSessionAppSettings:
        # (Optional) A list of custom SageMaker images configured
        # to run as an RSession app
        customImages:
          - appImageConfigName: 'rSessionAppImageConfig'
            imageName: 'rSessionImage'
            # (Optional) The version number of the CustomImage
            imageVersionNumber: 1
        # (Optional) Specifies the ARNs of a SageMaker image and
        # image version, and the instance type
        defaultResourceSpec:
          # (Optional) The instance type
          instanceType: 'ml.t3.medium'
          # (Optional) The ARN of the SageMaker image
          sageMakerImageArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image/test-rsession-image'
          # (Optional) The ARN of the image version
          sageMakerImageVersionArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:image-version/test-rsession-image/1'
          # (Optional) The ARN of the Lifecycle Configuration
          lifecycleConfigArn: 'arn:{{partition}}:sagemaker:{{region}}:{{account}}:studio-lifecycle-config/test-rsession-default-lcc'
      # (Optional) A collection of settings that configure user
      # interaction with the RStudioServerPro app
      rStudioServerProAppSettings:
        # (Optional) Indicates whether the current user has access
        # to the RStudioServerPro app
        accessStatus: 'ENABLED'
        # (Optional) The level of permissions that the user has
        # within the RStudioServerPro app (default: User)
        userGroup: 'R_STUDIO_ADMIN'
      # (Optional) The security groups for the VPC that Studio uses
      # for communication
      securityGroups:
        - 'sg-test-default-user-sg'
      # (Optional) Specifies options for sharing SageMaker Studio
      # notebooks
      sharingSettings:
        # (Optional) Whether to include the notebook cell output
        # when sharing the notebook (default: Disabled)
        notebookOutputOption: 'Allowed'
        # (Optional) KMS encryption key ID used to encrypt the
        # notebook cell output in S3
        s3KmsKeyId: 'arn:{{partition}}:kms:{{region}}:{{account}}:key/test-sharing-key'
        # (Optional) The S3 bucket used to store the shared
        # notebook snapshots
        s3OutputPath: 's3://test-sharing-bucket/notebooks/'
      # (Optional) Studio web portal state (enum: ENABLED, DISABLED)
      studioWebPortal: ENABLED
    # (Optional) Lifecycle configurations for Studio apps
    lifecycleConfigs:
      # (Optional) Lifecycle config for the main Jupyter App. Runs
      # each time the main Jupyter app container is launched.
      jupyter:
        # (Optional) Assets staged in S3, then copied to SageMaker
        # container before lifecycle commands run. Available under
        # $ASSETS_DIR/<asset_name>/
        assets:
          testing:
            # Local file or directory path to deploy
            sourcePath: ./testing_asset_dir
            # (Optional) Glob patterns to exclude from asset
            # packaging
            exclude:
              - '*.pyc'
              - '__pycache__'
        # Lifecycle commands to execute
        cmds:
          - echo "testing jupyter"
          - sh $ASSETS_DIR/testing/test.sh
      # (Optional) Kernel gateway app lifecycle config. Runs each
      # time a kernel gateway container is launched.
      kernel:
        assets:
          testing:
            sourcePath: ./testing_asset_dir
        cmds:
          - echo "testing kernel"
          - sh $ASSETS_DIR/testing/test.sh
      # (Optional) JupyterLab lifecycle script (Studio Latest).
      # Runs each time a JupyterLab app container is launched.
      jupyterLab:
        assets:
          testing:
            sourcePath: ./testing_asset_dir
        cmds:
          - echo "testing jupyterLab"
          - sh $ASSETS_DIR/testing/test.sh
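Most teams need only a small subset of the comprehensive sample. A trimmed sketch that keeps IAM auth, enables JupyterLab idle shutdown, and runs a single JupyterLab lifecycle command without staged assets, using only keys shown above. data-scientist-role is a hypothetical role name, and the 60-minute timeout is an arbitrary example value.

```yaml
team:
  dataAdminRoles:
    - name: Admin
  teamExecutionRole:
    name: team-execution-role
  studioDomainConfig:
    authMode: IAM
    vpcId: vpc-id
    subnetIds:
      - subnet-id
    dataAdminRoles:
      - name: Admin
    userProfiles:
      example-user-id:
        userRole:
          name: data-scientist-role   # hypothetical role name
    defaultUserSettings:
      jupyterLabAppSettings:
        appLifecycleManagement:
          idleSettings:
            # Shut JupyterLab apps down after 60 idle minutes
            lifecycleManagement: ENABLED
            idleTimeoutInMinutes: 60
    lifecycleConfigs:
      # assets is optional; cmds run on every JupyterLab launch
      jupyterLab:
        cmds:
          - echo "JupyterLab container starting"
```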

SSO Authentication Configuration

Demonstrates SSO auth mode with an existing security group and existing domain bucket. Choose this variant when your organization uses AWS IAM Identity Center (SSO) for federated user access to SageMaker Studio.


# Sample config for the Data Science Team module with SSO authentication.
# Demonstrates SSO auth mode with an existing security group and existing
# domain bucket. Use this approach when integrating with AWS IAM Identity
# Center (SSO) for federated user access to SageMaker Studio.
#
# For IAM auth mode with full property coverage, see sample-config-comprehensive.yaml.

# Complete data science team infrastructure configuration with SSO auth.
team:
  # See CONFIGURATION.md for role reference options (name, arn, id).
  # Admin roles granted access to team resources including KMS keys,
  # S3 buckets, and SageMaker resources.
  dataAdminRoles:
    - arn: 'arn:{{partition}}:iam::{{account}}:role/Admin'
  # (Optional) Team member roles for accessing shared resources.
  teamUserRoles:
    - name: AWSReservedSSO_datascientist_abcdefg
      # (Optional) Flag indicating the role should be resolved as
      # an AWS SSO auto-generated role
      sso: true
  # Execution role for SageMaker workloads including training jobs,
  # endpoints, and notebooks.
  teamExecutionRole:
    name: team-execution-role
  # (Optional) SageMaker Studio domain configuration with SSO auth
  # and existing security group / domain bucket.
  studioDomainConfig:
    # Authentication mode (enum: IAM, SSO)
    authMode: SSO
    # VPC ID for Studio domain deployment
    # Often created by your VPC/networking stack.
    # Example SSM: ssm:/path/to/vpc/id
    vpcId: vpc-id
    # Subnet IDs for Studio user applications
    # Often created by your VPC/networking stack.
    # Example SSM: ssm:/path/to/subnet/id
    subnetIds:
      - subnet-id
    # (Optional) Existing security group ID. Use this instead of
    # securityGroupIngress/securityGroupEgress when you have a
    # pre-existing security group to attach to the Studio domain.
    securityGroupId: sg-existing-sg-id
    # (Optional) Domain bucket configuration for shared storage.
    # Use this when you have a pre-existing S3 bucket for the
    # domain instead of letting the module create one.
    domainBucket:
      # S3 bucket name to use as the domain bucket
      domainBucketName: 'existing-domain-bucket'
      # Role used to deploy lifecycle assets. Must be assumable by
      # Lambda with write access to the domain bucket.
      assetDeploymentRole:
        name: asset-deployment-role
    # (Optional) Named user profiles for Studio domain. Key is the
    # user identifier: SSO User ID (SSO mode).
    userProfiles:
      sso-user-id:
        # (Optional) The role from which the user will launch the
        # user profile in Studio.
        userRole:
          name: AWSReservedSSO_datascientist_abcdefg
          sso: true
    # (Optional) Default user settings for Studio applications
    defaultUserSettings:
      # (Optional) Studio web portal state (enum: ENABLED, DISABLED)
      studioWebPortal: DISABLED
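In SSO mode each user profile is keyed by the IAM Identity Center user ID, so onboarding more users means adding more keys under userProfiles. A sketch with two profiles, where sso-user-id-1 and sso-user-id-2 are hypothetical placeholders for real Identity Center user IDs and both users launch Studio from the same SSO-generated role; the rest of studioDomainConfig stays as in the sample above. Because SSO roles are immutable, the SageMaker managed policies still have to be bound through the permission set.

```yaml
team:
  studioDomainConfig:
    authMode: SSO
    # vpcId, subnetIds, securityGroupId, domainBucket as above
    userProfiles:
      # Keys are IAM Identity Center user IDs (placeholders)
      sso-user-id-1:
        userRole:
          name: AWSReservedSSO_datascientist_abcdefg
          sso: true
      sso-user-id-2:
        userRole:
          name: AWSReservedSSO_datascientist_abcdefg
          sso: true
```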